Hey Bot! Give Me a Headline

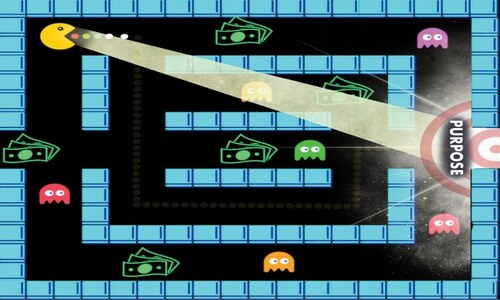

Artificial Intelligence is not a new concept. It has been part of our lives for a while in the form of Siri and Alexa, not to mention virtual agents on e-commerce sites and even speech recognition. So why this sudden anxiety about AI taking over our jobs (and possibly the world, as predicted in Hollywood sci-fi movies) one profession at a time?

The one-word answer: chatbots.

Although you may not be familiar with chatbots, you must have – either in passing or in an in-depth animated discussion – heard of ChatGPT (the GPT stands for Generative Pre-trained Transformer).

The Oxford Dictionary’s definition of a chatbot is “a computer programme designed to simulate conversation with human users.”

Basically, chatbots engage with us in human-like conversations to help us with multiple tasks, including, but not limited to, summarising long documents, coming up with ideas for a marketing campaign, and writing essays with citations. (For the most accurate idea of what they can do, I highly recommend using ChatGPT if you haven’t already.)

Almost every profession across the globe, from lawyers and teachers to designers and marketers, is figuring out whether chatbots are friends or foes. Journalists in Pakistan are attempting to do the same – and given that the industry is perpetually going through financial crunches, it is not surprising that most are worried that these faceless bots with ace English could cost them their jobs.

I, like many, set out to experiment (admittedly a little sceptically) with ChatGPT and posed a rather open-ended question to it. “What is wrong with Imran Khan?”

Ask this question in a newsroom and there are bound to be raised eyebrows, a few smirks and lengthy debates on the question itself. The AI, rightfully, said it has no personal biases or opinions. It, however, did list some of the most widely dished out criticisms of the PTI-led government. But when asked for sources it started to fall apart. By that, I don’t mean that it couldn’t back up the points made with news articles. In fact, it produced multiple links, but they would not work. When repeatedly told that the links were broken, all the polite bot could do was apologise and insist I check again. Alarmingly, I discovered after some back and forth that the articles themselves did not exist. Now, in real time in the newsroom, you are not given the bandwidth to go chasing after broken links – unless you suspect foul play, which would be a theory that a human, not a bot without opinions, could come up with and look into.

I will not delve into my other interactions with the bot, but simply say that my time with the Microsoft-backed OpenAI’s ChatGPT told me that the AI as it stands now is no match for humans. However, to gain a wider perspective, I reached out to fellow editors who have been trained to think for themselves and constantly remind their teams, amid the onslaught of press conferences and WhatsApp tickers, that journalists are not mere transcribers.

Here are some thoughts that stood out:

Too Much Dependency at the Cost of Originality:

Mahim Maher, Editor, Aaj.tv, who has trained many journalists (this writer included), points out that in her newsroom (which is attached to a TV channel) a lot of the work involves translation and calling up reporters for fresh developments. “I’m not keen on the team using AI for these actions. It weakens their muscles to investigate, inquire, probe and learn how to ask questions.”

“We are focusing on writing better and with more clarity on complex issues and for that the writing muscle and independent thinking has to be developed and encouraged… Nothing can beat a smart subeditor writing something funny (for a social media post) because they understand the context,” she says.

However, Zain Siddiqui, Assistant Editor at Dawn.com, is of the opinion that chatbots can serve as good brainstorming assistants. “They can be a generally good tool to bounce ideas off and open a window to new ways of thinking about a problem.”

The A Is Not for Accountability:

Most editors I spoke to said they are still exploring the world of AI, but based on their experiences so far, one issue was common. You cannot trust the bots.

Abdul Sattar Abbasi, News Editor, Dawn.com, during his exploration of multiple conversational bots, found the same flaw. “It doesn’t do well with facts and tries to fill factual gaps with outright falsehoods. The drafts it produces, while really, really well written, require a human pair of eyes.”

In fact, the AI itself is quite cognisant of the fact that humans have to bear all the responsibility in a newsroom. As Sattar reports, “When I asked what it could do in a newsroom, it ended with a disclaimer: ‘Remember that while I am a powerful AI tool, I am not perfect and should not replace human judgement. Be sure to double-check my work and use my assistance in conjunction with your team’s expertise.’”

With such a gaping trust deficit, many editors are sceptical about bringing AI into the newsroom, even with its strengths, such as writing scripts for videos, checking for typos, and coming up with graphics and designs. “I have seen ChatGPT manufacture information in some cases,” says Siddiqui. “Although we may be tempted to think of them as a good and quick resource for brief information and analysis on pre-existing topics, if you can’t fully trust the information they are providing, are they really as helpful as we would like to believe?” He does leave room for the possibility of improvement. “This may quickly change as AI systems grow more sophisticated and reliable. But as journalists, we will still need to be accountable for the work we expect them to do.”

Sattar is less wary of AI becoming a team member, noting that chatbots like ChatGPT (in their current shape) do two things exceptionally well: English composition and article structure. He says that in a newsroom setup, a chatbot can draft articles as well as the best subeditor, provide outlines, suggest headlines, check grammar and make copy more coherent. “Am I worried about whether it will replace humans? Yes. The tech is powerful and disruptive, and job displacements are inevitable, especially at the rate in which it is progressing.”

I, however, am not sold on the bots just yet. As an editor, I would much prefer efficiency and speed over every other aspect of the newsroom – except for two very key components of the news: accuracy and nuance.

So will bots replace reporters in the field? No. Can they take over the jobs of subeditors trained to add nuance with great care when it comes to topics like the military (who do we name and when, for example) or blasphemy? No. Will AI be able to come up with the questions to ask Bilawal Bhutto Zardari for an interview? Sure, and probably some good ones. But it will not be a match for a journalist who is going to react during the interview, study Bilawal’s body language and tone, and find a peg that could not have been anticipated by a bot.

Having said this, we should not miss the point. AI does have something to offer. According to a New York Times report, major international publishers are already pulling together task forces to weigh options, making it a priority to study AI’s impact on journalism and digital strategy.

Every newsroom in Pakistan should be exploring how to make optimal use of AI in the current media setup, especially since, by the time this article is published, there could well be versions out there that are leaps and bounds ahead of the current bots eerily mimicking human responses and instincts.

Zahrah Mazhar is Managing Editor, Dawn.com. Instagram: @zeeinstamazhar
